Your content calendar is packed. You have writers, editors, and a publishing queue. The bottleneck is rarely the creation of the first draft. The real friction starts when that draft needs to move from a Google Doc to a live, optimized page on your site. This is where the fundamental operational choice presents itself: do you manage this process manually, with human oversight at every step, or do you introduce automation? The decision isn't about chasing the latest trend. It's a strategic calculation that impacts your team's capacity, your content's quality, and ultimately, your SEO performance. Choosing correctly requires understanding what you gain, what you risk, and where each approach truly shines in the messy reality of content operations.

The tension between automation and manual publishing isn't new, but the stakes are higher. Content velocity is a competitive metric, yet Google's emphasis on E-E-A-T demands nuance and expertise that machines struggle to replicate. A purely manual process can ensure that nuance but may limit your scale. Full automation can deliver scale but might dilute the very quality signals search engines reward. This article breaks down the practical, day-to-day realities of both models. We'll examine the workflows, pinpoint where automation creates leverage versus where human judgment is non-negotiable, and outline the common pitfalls teams encounter when they lean too far in one direction.

Defining the workflows: What "manual" and "automated" actually mean in practice

Imagine a writer finishing an article. In a manual publishing workflow, what happens next involves a series of deliberate, human-led handoffs. The document moves to an editor who reviews for voice, accuracy, and clarity. Once approved, it goes to a web producer or content manager. This person logs into the CMS, creates a new post, and begins the transfer. They copy and paste the text, but the job has just started. They must now manually format headings, insert and optimize images with alt text, craft the meta title and description, set the URL slug, add internal links to relevant pages, configure the correct categories and tags, and schedule the publication. Each step is a discrete action, often requiring context about the site's SEO structure and content strategy.

An automated publishing workflow reimagines this chain as a connected system. The finished, approved article resides not just as a document but as structured data. Through an API connection, a platform or custom script can ingest this content and push it directly to the CMS. The automation handles the repetitive, rules-based tasks: creating the post, applying the correct template, formatting the HTML, populating the meta fields from a predefined schema, assigning tags based on content analysis, and even publishing it on schedule. The human role shifts from executor to supervisor and configurator. They define the rules, set up the templates, and establish the quality gates the automation must pass through.
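To make "the article as structured data" concrete, here is a minimal sketch of how an approved article might be mapped onto a CMS request body. The `Article` fields, the payload keys, and the `build_cms_payload` helper are all hypothetical; a real CMS API will define its own schema, and the actual HTTP call is deliberately left out.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Article:
    """An approved article as structured data (field names are illustrative)."""
    title: str
    body_html: str
    meta_description: str
    tags: list = field(default_factory=list)


def slugify(title: str) -> str:
    """Lowercase the title and join its alphanumeric runs with hyphens."""
    return "-".join(re.findall(r"[a-z0-9]+", title.lower()))


def build_cms_payload(article: Article, publish_at: str) -> dict:
    """Map the article onto a hypothetical 'create post' request body."""
    return {
        "title": article.title,
        "slug": slugify(article.title),
        "content": article.body_html,
        "meta": {
            "title": article.title,
            "description": article.meta_description,
        },
        "tags": sorted(set(article.tags)),  # de-duplicate and order tags
        "status": "scheduled",
        "publish_at": publish_at,
    }
```

The point of the sketch is that every field a web producer would fill in by hand becomes a key that is populated by rule, which is what lets the human role move from execution to configuration.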

The manual touchpoints that resist full automation

Not every task is a good candidate for automation. The most effective hybrid models identify which elements require a human in the loop. Editorial judgment is the prime example. Can an algorithm detect a subtly misleading claim, a tone that feels off-brand for a sensitive topic, or a complex argument that loses logical coherence? Current technology suggests caution. Similarly, strategic internal linking often requires an understanding of the entire site's architecture and business goals that pure keyword matching can't replicate. Choosing the single most authoritative page to link to from a new article is a contextual decision.

Another area is exception handling. What happens when the automation encounters something unexpected? A source URL is broken, an image ratio doesn't fit the template, or a keyword target is missing. A manual workflow naturally pauses for a decision. An automated one will fail loudly, stop silently, or, worse, publish with a glaring error. Teams that automate successfully build robust exception reporting and designate clear human oversight for these edge cases. The goal isn't to eliminate people but to deploy them on higher-value problems.
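One way to avoid the "publish with a glaring error" outcome is to make the automation escalate instead of guessing. The sketch below is an assumption-laden illustration: the draft keys (`target_keyword`, `broken_source_urls`, `images`), the 16:9 template ratio, and the `publish` and `notify` callbacks are all hypothetical stand-ins for whatever your stack provides.

```python
from dataclasses import dataclass, field

TEMPLATE_RATIO = 16 / 9  # assumed featured-image ratio for this sketch


@dataclass
class PublishResult:
    status: str  # "published" or "needs_review"
    problems: list = field(default_factory=list)


def find_problems(draft: dict) -> list:
    """Collect every issue that should stop an unattended publish."""
    problems = []
    if not draft.get("target_keyword"):
        problems.append("missing target keyword")
    # Assumes an upstream link checker has already listed dead URLs.
    for url in draft.get("broken_source_urls", []):
        problems.append(f"broken source URL: {url}")
    for image in draft.get("images", []):
        width, height = image["width"], image["height"]
        if height <= 0 or abs(width / height - TEMPLATE_RATIO) > 0.05:
            problems.append(f"image {image['name']} does not fit the template ratio")
    return problems


def publish_or_escalate(draft: dict, publish, notify) -> PublishResult:
    """Publish only clean drafts; route everything else to a human."""
    problems = find_problems(draft)
    if problems:
        notify(problems)  # fail loudly, never silently
        return PublishResult("needs_review", problems)
    publish(draft)
    return PublishResult("published")
```

The design choice worth noting is that the function has no path that both detects a problem and publishes anyway; the only way past a problem is a human decision.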

Measuring impact: Speed, scale, and resource allocation

The most cited advantage of automation is speed. This is true, but the magnitude matters. For a team publishing a few articles a month, the time saved by automating publishing might be marginal. The manual process, while tedious, is manageable. The equation changes dramatically for teams producing dozens or hundreds of pieces monthly. The compounding time cost of manual formatting, meta tag entry, and scheduling becomes a significant drain on skilled personnel. Automation transforms publishing from a linear, time-consuming task into a parallelizable process. While a web producer can only manually publish one article at a time, an automated system can queue and publish ten simultaneously, limited only by server capacity and editorial approval gates.
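As a rough illustration of publishing as a parallelizable process, the sketch below pushes a batch of articles concurrently while still respecting an editorial approval gate. The `approved` flag and the `publish_one` callback are assumptions; in practice `publish_one` would wrap your CMS API call, and the worker count would be tuned to what the server tolerates.

```python
from concurrent.futures import ThreadPoolExecutor


def publish_batch(articles, publish_one, max_workers=10):
    """Publish every approved article concurrently and return the results in order."""
    # The approval gate: unapproved articles never reach the publisher.
    approved = [a for a in articles if a.get("approved")]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() preserves input order even though calls run in parallel.
        return list(pool.map(publish_one, approved))
```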

This shift directly impacts resource allocation. In manual models, senior team members often get bogged down in execution. An SEO specialist might spend hours a week on implementation details inside the CMS rather than on strategy, keyword research, or technical audits. Automation liberates these high-cost hours. The specialist can instead focus on optimizing the templates and rules that govern the automation, effectively multiplying their impact. The return on investment isn't just in articles published per hour, but in the strategic value of the human hours recovered. Teams often find that after implementing publishing automation, they can scale content output without proportionally scaling headcount, or they can redirect existing headcount to more creative and analytical work.

The quality paradox: Can automated publishing uphold SEO and editorial standards?

Here lies the core anxiety for many content leaders. Automation is associated with volume, and volume is often (sometimes fairly) associated with a drop in quality. The critical insight is that the publishing mechanism itself does not determine quality; the inputs and guardrails do. A poorly written, thin article published manually will perform badly. A comprehensive, well-researched article published through a robust automated system can excel. The risk with automation is that it can make it easier to publish subpar content if the quality checks are not rigorously maintained upstream. The editorial bar must be set before the automation triggers.

For SEO, automation offers consistency, which is a key component of quality. A human might forget to add image alt text one day or format an H2 heading inconsistently. A properly configured automation applies the same standards every single time. It can ensure every post has a meta description, every image includes alt attributes, and every URL follows a clean, keyword-informed slug structure. This technical consistency is a baseline SEO hygiene factor that automation can guarantee. However, automation cannot judge whether the chosen keyword is the most strategic intent match or whether the meta description is compelling to humans. That requires strategic human input during the setup and periodic reviews.
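The hygiene checks named above are mechanical enough to sketch with the Python standard library. This is an illustrative example rather than a complete SEO validator: the `hygiene_report` function and its report keys are hypothetical, the slug pattern is one possible definition of "clean", and the sketch treats an empty `alt` as missing even though empty alt text can be legitimate for purely decorative images.

```python
import re
from html.parser import HTMLParser

# Lowercase words or numbers separated by single hyphens.
SLUG_PATTERN = re.compile(r"^[a-z0-9]+(?:-[a-z0-9]+)*$")


class ImageAltAuditor(HTMLParser):
    """Records the src of every <img> that has no usable alt text."""

    def __init__(self):
        super().__init__()
        self.missing_alt = []

    def handle_starttag(self, tag, attrs):
        if tag == "img":
            attributes = dict(attrs)
            if not (attributes.get("alt") or "").strip():
                self.missing_alt.append(attributes.get("src", "<no src>"))


def hygiene_report(html: str, meta_description: str, slug: str) -> dict:
    """Run the same baseline checks on every post, every time."""
    auditor = ImageAltAuditor()
    auditor.feed(html)
    auditor.close()
    return {
        "has_meta_description": bool(meta_description.strip()),
        "slug_is_clean": bool(SLUG_PATTERN.match(slug)),
        "images_missing_alt": auditor.missing_alt,
    }
```

Note what the report does not contain: nothing here can say whether the meta description is persuasive or the keyword well chosen, which is exactly the part that stays with a person.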

Where manual oversight still wins for nuanced quality

Consider the final "preview" before hitting publish. In a manual process, a human visually scans the rendered page in the CMS. They might notice that a pull quote looks awkward on mobile, that a table has broken formatting, or that the featured image doesn't crop well. This last-mile QA is perceptual and contextual. Automated systems can run technical validation checks, but aesthetic and usability judgment remains a human forte. Furthermore, nuanced optimization, like tweaking an introduction for better readability or adding a last-minute internal link to a freshly published report, is a spontaneous, strategic act. It requires an understanding of the content's purpose that exists outside of a predefined rule set.

On the SEO front, manual publishing allows for on-the-fly adjustments based on very recent data or trends. If a competitor just published a major study, a human editor might immediately decide to reference and link to it within a relevant, scheduled article. An automated system, unless specifically programmed for real-time content ingestion, would not make that connection. This capacity for real-time strategic pivoting is a subtle but powerful advantage of having a knowledgeable human in the publishing driver's seat, especially in fast-moving verticals.

Common pitfalls and hidden costs of each approach

Choosing a path without anticipating its drawbacks leads to frustration. The pitfalls of manual publishing are often operational and gradual. The first is burnout. The repetitive nature of the work leads to fatigue and human error, which ironically compromises the quality the process was meant to protect. Missed alt text, typos in meta descriptions, and incorrect publication dates start to creep in. The second is the creation of a single point of failure. If the one team member who knows the CMS intricacies is out sick or leaves the company, the entire publishing engine can grind to a halt. Documentation is rarely as comprehensive as assumed.

The hidden cost is opportunity. The hours spent on manual publishing are hours not spent on content promotion, community building, or analyzing performance data. Teams can become so busy with the logistics of publishing that they neglect the activities that make published content successful. This creates a hollow velocity: lots of content going live, but little effort to ensure it reaches and engages its intended audience.

The automation traps: Over-reliance and integration debt

Automation introduces its own set of risks. The most significant is the "set and forget" mentality. Teams invest in an automated system, configure it once, and assume it will run perfectly indefinitely. In reality, CMSs update, website designs change, and SEO best practices evolve. An automation template built for today's page layout will break after a site redesign. Without ongoing technical maintenance, the automation becomes a source of errors, publishing content that looks broken or is missing new required fields. This creates integration debt: the compounding cost of maintaining connections between systems.

Another trap is automating a bad process. If the pre-publishing editorial workflow is disorganized or lacks clear approval gates, automation will simply amplify the chaos, pushing inconsistent and unvetted content live faster. The initial setup cost is also a factor. Building reliable API integrations, creating robust templates, and defining exception-handling protocols requires upfront investment in developer time or platform costs. For small teams with limited technical resources, this initial hurdle can be prohibitive, locking them into a manual process by necessity rather than choice.

Finding the hybrid model: A practical framework for content teams

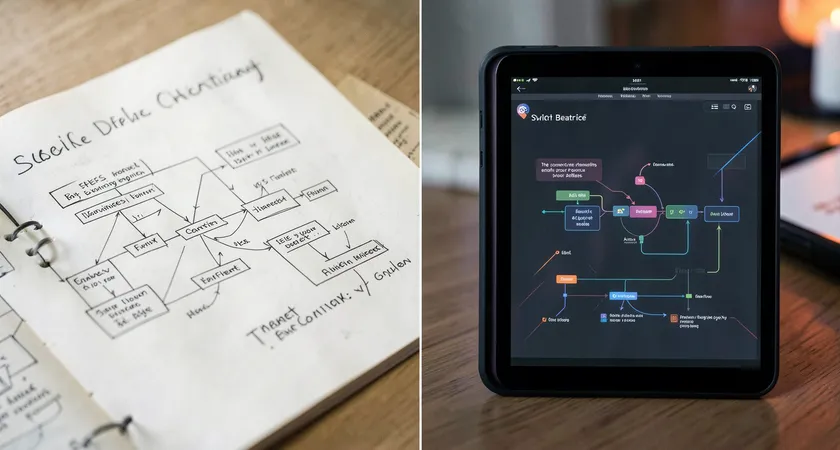

The binary choice between fully manual and fully automated is a false one for most professional teams. The winning strategy is a deliberate hybrid that plays to the strengths of both. This starts with a ruthless audit of your current publishing workflow. Map out every single step from final edit to live page. For each step, ask two questions: "Does this require human judgment or creativity?" and "Is this step rule-based and repetitive?" The items in the second category are your primary candidates for automation.
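The two audit questions translate naturally into a small classification table. The sketch below is one possible encoding; the `Step` type and the four labels are assumptions made for illustration, and in particular the handling of steps that answer "yes" to both questions, or to neither, goes beyond what the audit itself prescribes.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    needs_judgment: bool  # "Does this require human judgment or creativity?"
    rule_based: bool      # "Is this step rule-based and repetitive?"


def classify(step: Step) -> str:
    """Sort a workflow step into a bucket based on the two audit questions."""
    if step.rule_based and not step.needs_judgment:
        return "automate"
    if step.needs_judgment and not step.rule_based:
        return "keep manual"
    if step.needs_judgment and step.rule_based:
        # Assumption: mixed steps get automation plus a human checkpoint.
        return "automate with human review"
    # Assumption: a step that is neither may not belong in the workflow.
    return "reconsider step"


def audit(steps: list) -> dict:
    """Group step names by recommendation."""
    grouped = {}
    for step in steps:
        grouped.setdefault(classify(step), []).append(step.name)
    return grouped
```

Running `audit` over a mapped workflow gives a first-pass split to discuss with the team; it is a conversation starter, not a verdict.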

A typical effective hybrid model looks like this. Content creation and final editorial approval remain thoroughly human-driven processes. The output of this phase is a structured document that includes not just the body text but also designated fields for metadata, target keywords, and image references. This package is then handed off to an automated system. The automation handles the technical lifting: creating the CMS entry, applying formatting, populating the meta fields, scheduling the post, and generating basic image alt text from provided descriptions. The system then triggers a notification to a human for the final review. This person checks the live preview, ensures formatting is correct on all devices, and adds any final strategic touches, like a specific internal link or a social media message, before clearing the automated system to publish.
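The handoffs in this hybrid model can be sketched as a small state machine in which each transition belongs to either a human or the automation. The stage names and the `advance` helper are hypothetical; they simply encode the sequence described above so that neither side can skip the other's gate.

```python
from enum import Enum


class Stage(Enum):
    DRAFT = 1
    EDITORIAL_APPROVED = 2
    STAGED_IN_CMS = 3
    FINAL_REVIEW_PASSED = 4
    PUBLISHED = 5


# For each stage: the stage that follows and who is allowed to trigger it.
NEXT_STEP = {
    Stage.DRAFT: (Stage.EDITORIAL_APPROVED, "human"),
    Stage.EDITORIAL_APPROVED: (Stage.STAGED_IN_CMS, "automation"),
    Stage.STAGED_IN_CMS: (Stage.FINAL_REVIEW_PASSED, "human"),
    Stage.FINAL_REVIEW_PASSED: (Stage.PUBLISHED, "automation"),
}


def advance(stage: Stage, actor: str) -> Stage:
    """Move an article forward one stage if the right actor requests it."""
    if stage not in NEXT_STEP:
        raise ValueError(f"{stage.name} is a final stage")
    next_stage, required_actor = NEXT_STEP[stage]
    if actor != required_actor:
        raise PermissionError(
            f"{stage.name} -> {next_stage.name} requires {required_actor}"
        )
    return next_stage
```

Encoding ownership in the transition table means the automation cannot pass its own final review, and a human cannot bypass the staging step that applies the templates.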

This model balances efficiency with control. It removes the grunt work and ensures consistency while retaining human oversight at the most critical junctures for quality and strategy. The key to making it work is having clear definitions and responsibilities. The editorial team must understand how to prepare content for the automation (e.g., using specific heading styles, providing image metadata). The developer or martech manager must maintain the automation's health. And a content or SEO lead must own the rules and templates that govern the process, regularly updating them based on performance data and changing goals.

The debate between automation and manual publishing isn't about which is universally better. It's about fit. For a small team publishing deeply researched, long-form content where every nuance matters, a heavily manual process with light automation for scheduling might be optimal. For a larger team running a content hub that demands consistent output across many product lines, a robust automated pipeline with strong human gates is likely the path to sustainable scale. The wrong move is to remain static. As your content ambitions grow, the purely manual model will crack under pressure. And as search engines get smarter, a purely automated model that lacks human insight will fail to resonate.

The practical next step is to conduct your own workflow audit. Time how long publishing actually takes, track the errors that occur, and calculate the opportunity cost of your team's current effort. This data will point you toward the right balance. For many organizations, navigating this shift, especially the technical integration and process redesign, benefits from external experience. Having guided multiple teams through this transition, we see a clear pattern: success lies not in choosing a side, but in designing a smarter, more resilient system that lets both people and technology do what they do best.
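The workflow audit suggested above can start as simple arithmetic. The sketch below assumes you have timed a sample of publishing sessions and counted how many of them contained an error; the function name, its parameters, and the idea of pricing hours at a flat hourly rate are illustrative simplifications, not a standard method.

```python
from statistics import mean


def audit_summary(sample_minutes, sample_errors, articles_per_month, hourly_rate):
    """Estimate the monthly time, cost, and error rate of manual publishing."""
    if not sample_minutes:
        raise ValueError("time at least one publishing session first")
    avg_minutes = mean(sample_minutes)
    monthly_hours = avg_minutes * articles_per_month / 60
    return {
        "avg_minutes_per_article": avg_minutes,
        "monthly_hours": monthly_hours,
        # Opportunity cost: what those hours cost if spent elsewhere.
        "monthly_cost": monthly_hours * hourly_rate,
        # Share of sampled sessions that contained at least one error.
        "error_rate": sample_errors / len(sample_minutes),
    }
```

For example, calling `audit_summary([30, 40, 50, 40], sample_errors=1, articles_per_month=60, hourly_rate=50)` describes a team whose sampled sessions average 40 minutes each. Numbers like these make it easier to judge whether the setup cost of automation is justified at your current volume.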