You've built a collection of articles, product listings, or forum threads. Now you face a fundamental architectural decision. Should you present it as a single, long page (static), or split it into a sequence of smaller, linked pages (paginated)? This choice influences everything from Core Web Vitals and crawl budget to user engagement and conversion paths. Getting it wrong can silently drain your SEO equity and frustrate your audience. This analysis moves beyond the surface-level debate to examine the concrete operational impacts, implementation pitfalls, and long-term maintenance considerations for each approach. We will focus on practical outcomes, drawing from common audit findings and project patterns to guide your decision.

Defining the battle lines: what we mean by static and paginated delivery

Let's avoid ambiguity from the start. Static content delivery, in this context, refers to serving an entire content set from a single URL. A user scrolls through all 150 product reviews, all 50 blog comments, or a complete 10,000-word guide without clicking to load more or navigate to a 'page 2'. This is often achieved via infinite scroll, a 'load more' button that fetches content client-side, or simply a very long HTML document.

Paginated content delivery breaks that set into discrete chunks, each accessible via a unique URL (e.g., /reviews/, /reviews/page/2/, /reviews/page/3/). Users navigate via numbered links, 'next/previous' buttons, or sometimes a 'view more' pattern that loads subsequent pages while updating the URL. The key distinction is the creation of multiple, indexable pages for a single logical content series.
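As a rough illustration of that URL scheme, here is a minimal Python sketch. The function name `page_url` is hypothetical, and the choice to serve page 1 at the base URL (rather than at /page/1/) is an assumption taken from the example paths above.

```python
def page_url(base: str, page: int) -> str:
    """Return the path for one page of a paginated series."""
    if page < 1:
        raise ValueError("page numbers start at 1")
    # Normalize so that "reviews", "/reviews" and "/reviews/" all behave alike.
    base = "/" + base.strip("/") + "/"
    # Page 1 lives at the base URL, so /page/1/ never exists as a duplicate.
    return base if page == 1 else f"{base}page/{page}/"


if __name__ == "__main__":
    for n in (1, 2, 3):
        print(page_url("/reviews/", n))
```

Keeping page 1 at the base URL means there is exactly one address for the first chunk, which avoids creating a duplicate before any canonical tags are involved.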

A common misconception is that pagination is inherently bad for SEO. The reality is more nuanced. Both patterns have legitimate uses, and their SEO success depends entirely on correct implementation and alignment with user intent. The friction begins when the technical execution fails to support the chosen content model.

SEO implications: crawl efficiency, link equity, and content cannibalization

Consider a search engine bot arriving at your paginated product category. It finds 200 pages of products, each page linking to the next. If those pages are thin, nearly identical apart from a shifting set of 12 products, you've just consumed a significant portion of your crawl budget on low-value variations. This dilutes the bot's ability to find your unique, important content. Pagination, without careful signals, can create a crawl trap.

Static delivery consolidates all authority and links to one URL. There's no dilution. All ranking signals point to a single destination. However, this creates a different challenge. If that single page hosts a massive volume of content that changes frequently, search engines may struggle to understand its primary topic or may index it slowly if recrawls are infrequent. The sheer size can also impact Core Web Vitals, which we will address separately.

Link equity distribution is the ledger to watch. With pagination, you must decide how to distribute 'link juice' from external backlinks and internal navigation. Does Page 1 pass most of the value to Page 2? Should all pages link back to a 'View All' page? Missteps here can leave later pages in a series virtually invisible to search engines. The rel='next' and rel='prev' link annotations, once the recommended signal, are no longer used by Google for indexing, as it confirmed in 2019. They remain valid HTML and other consumers may still read them, but they should not be your primary signal. Google's current guidance centers on using clear, crawlable links, a self-referential canonical tag on each page, and ensuring each page provides unique value.

The canonicalization challenge

For paginated content, each page should normally canonicalize to itself. This tells search engines, 'This page of results is a distinct entity.' The exception is a static 'View All' page that duplicates the paginated content. That page should canonicalize to itself, and if you want it to be the indexed version, the paginated series should either carry a noindex tag or a canonical pointing to the 'View All' page. Choose one model and apply it consistently. Getting this matrix wrong is a frequent finding in technical audits. The usual cost is not a formal penalty but self-created duplication, where search engines filter pages out or index a version you did not intend.
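The self-referential case can be sketched in a few lines of Python. This is an illustration only: the helper names, the `https://example.com` origin, and the /page/N/ path scheme are assumptions, not part of any particular platform.

```python
from html import escape


def page_url(origin: str, base: str, page: int) -> str:
    """Absolute URL for one page; page 1 is assumed to live at the base URL."""
    path = "/" + base.strip("/") + "/"
    if page > 1:
        path += f"page/{page}/"
    return origin.rstrip("/") + path


def canonical_tag(origin: str, base: str, page: int) -> str:
    """Self-referential canonical: the page names its own URL."""
    href = escape(page_url(origin, base, page), quote=True)
    return f'<link rel="canonical" href="{href}">'


def is_self_canonical(requested_url: str, canonical_href: str) -> bool:
    """Audit check: does the canonical point back at the requested page?"""
    return requested_url.rstrip("/") == canonical_href.rstrip("/")


if __name__ == "__main__":
    print(canonical_tag("https://example.com", "/reviews/", 2))
```

A check like `is_self_canonical` is the kind of assertion an audit script might run over a crawl export to flag pages in a series whose canonical points somewhere unexpected.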

User experience and conversion metrics: engagement versus completion

A marketing team wants users to see all 50 testimonials to build trust. A single, scrollable page seems ideal. Yet, analytics reveal a problem. The scroll depth graph shows a steep drop-off after the first few testimonials. The page is so long users feel overwhelmed and abandon it. The goal of showcasing volume backfires because it lacks manageable information architecture.

Pagination, by breaking content into chunks, provides clear milestones. A user on 'Page 3 of 10' understands their progress. This can increase perceived completion rates and encourage continued navigation. However, each click is a friction point, an opportunity for the user to exit. For tasks requiring continuous reading or comparison, like a long tutorial or a product spec sheet, forcing a click between sections is disruptive and often leads to higher exit rates from those interior pages.

The decision hinges on the primary user task. Is it browsing or processing? Browsing items, like news articles or forum topics, often suits pagination. Processing contiguous information, like a legal document or a buying guide, typically favors static delivery. Observe your own analytics. A low click-through rate from page 1 to page 2 of a paginated series suggests users' needs were met on page 1, or the friction to continue was too great.

Technical performance and Core Web Vitals: the speed tax

Page speed is not just a ranking factor; it's a user expectation. A static page containing hundreds of product cards, high-resolution images, and associated scripts can become extremely heavy. This directly harms Largest Contentful Paint (LCP), and it can worsen Cumulative Layout Shift (CLS) when images lack reserved dimensions or content is injected as the user scrolls. While lazy loading images can help, the initial HTML payload and render-blocking resources for a massive page can create unacceptable delays, especially on mobile networks.

Paginated pages are leaner by design. Each page loads quickly because it contains less content. This is excellent for Core Web Vitals scores on a per-page basis. However, the cumulative experience changes. At 12 items per page, the user who needs to view 40 items now incurs the LCP cost 4 times across 4 page loads, plus the latency of each navigation. The total time to consume the content may be higher, and each new page load resets the LCP clock for that URL.
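The arithmetic behind that cumulative cost can be made explicit. The sketch below is a back-of-the-envelope model only: the 12-items-per-page figure, the 1.5-second LCP, and the 0.5-second navigation latency are all assumed values for illustration, not measurements.

```python
import math


def page_loads_needed(items_wanted: int, per_page: int) -> int:
    """How many separate page loads it takes to see `items_wanted` items."""
    return math.ceil(items_wanted / per_page)


def cumulative_wait_seconds(
    items_wanted: int,
    per_page: int,
    lcp_seconds: float,
    nav_latency_seconds: float,
) -> float:
    """Total waiting time across a paginated journey (simplified model)."""
    loads = page_loads_needed(items_wanted, per_page)
    # Every page pays its own LCP; every page after the first also pays
    # the latency of the click that led to it.
    return loads * lcp_seconds + (loads - 1) * nav_latency_seconds


if __name__ == "__main__":
    print(page_loads_needed(40, 12))
    print(cumulative_wait_seconds(40, 12, lcp_seconds=1.5, nav_latency_seconds=0.5))
```

Comparing this total against the measured LCP of a single long page gives a first, rough sense of which pattern costs the user more time for a given task.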

Advanced implementations like hybrid approaches exist. A site might load the first page statically for speed and SEO, then use JavaScript to fetch and append subsequent pages as the user scrolls, all while updating the URL using the History API. This attempts to marry the SEO benefits of a crawlable first page with the performance and UX benefits of incremental loading. It's also more complex to implement without breaking crawlability or creating indexing issues with the dynamically loaded content.

Infrastructure and cache considerations

A heavily paginated, high-traffic blog index page can strain your database with frequent queries for different page offsets. Static pages, particularly if they can be cached as full HTML documents on a CDN, serve incredibly fast and reduce server load. But caching a massive, personalized, or frequently updated static page is difficult. The invalidation logic becomes complex. Paginated content is often easier to cache in fragments.

Implementation patterns and frequent audit failures

In practice, most problems arise not from choosing pagination or static delivery, but from implementing them poorly. Let's walk through common failure modes observed in site audits.

A frequent pagination error is creating paginated pages that are nearly empty. If you have 12 items per page and only 13 total items, page 2 contains a single item. This creates a poor user experience and a thin content page. Logic should suppress pagination when the item count barely exceeds the per-page limit. Another critical flaw is omitting crawlable pagination links from the first page. If search engines or users cannot find the link to page 2, it will not be crawled or indexed.
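One way to suppress a near-empty final page is to fold a small remainder into the previous page. The Python sketch below shows the idea; the function names and the `orphans` threshold are illustrative choices (the parameter name echoes the `orphans` option in Django's `Paginator`, which works along similar lines), so treat it as an assumption-laden sketch rather than a drop-in implementation.

```python
import math
from typing import List, Sequence


def page_count(total_items: int, per_page: int, orphans: int = 0) -> int:
    """Number of pages, merging a short final page into the one before it.

    If the last page would hold `orphans` items or fewer, those items are
    shown on the preceding page instead of getting a page of their own.
    """
    hits = max(1, total_items - orphans)
    return math.ceil(hits / per_page)


def page_slice(items: Sequence, page: int, per_page: int, orphans: int = 0) -> List:
    """Items shown on a 1-based page number under the same rule."""
    pages = page_count(len(items), per_page, orphans)
    if not 1 <= page <= pages:
        raise ValueError(f"page must be between 1 and {pages}")
    start = (page - 1) * per_page
    # The last page absorbs any orphaned remainder.
    end = len(items) if page == pages else start + per_page
    return list(items[start:end])


if __name__ == "__main__":
    print(page_count(13, 12))             # without the rule
    print(page_count(13, 12, orphans=3))  # with the rule
```

With 13 items, 12 per page, and an orphan threshold of 3, the series collapses to a single page of 13 items, so the thin page 2 is never generated.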

For static delivery via infinite scroll, the cardinal sin is failing to create linkable, crawlable access to content that loads later. When a user scrolls and content appears via JavaScript, search engines may not see it unless you server-side render the first view and give each subsequently loaded chunk its own URL that can be requested directly. Similarly, a 'Load More' button that doesn't change the URL state means the fetched content is only accessible during that single session. It cannot be linked to, shared, or indexed.
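On the server side, one hedge against this failure is to render 'Load More' as an ordinary link to the next chunk's URL, which a script may then enhance. The Python sketch below illustrates the markup only; the function name, the CSS class, and the `data-enhance` attribute are hypothetical hooks, and the client-side script that would intercept the click is not shown.

```python
from html import escape
from typing import Optional


def load_more_link(next_page_url: Optional[str]) -> str:
    """Render 'Load more' as a real link, or nothing on the last chunk."""
    if next_page_url is None:
        return ""
    href = escape(next_page_url, quote=True)
    # The href works without JavaScript, so crawlers and shared links can
    # reach the next chunk. The data attribute is a hypothetical hook that a
    # script could use to fetch and append the same URL in place.
    return f'<a class="load-more" href="{href}" data-enhance="append">Load more</a>'


if __name__ == "__main__":
    print(load_more_link("/reviews/page/2/"))
```

Because the fallback is a plain anchor, the content behind the button stays reachable even when the enhancement script fails or never runs.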

Both approaches are prone to internal linking mistakes. Sites with pagination often forget to interlink paginated pages contextually within the content itself. Sites with massive static pages frequently lack a detailed table of contents with anchor links, denying users and search engines a clear map of the page's structure.
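For the long-page case, a table of contents can be generated from the section headings. The Python sketch below is a minimal illustration: the slug rules are an assumption, headings are assumed to be unique, and the matching `id` attributes still have to be added to the headings themselves.

```python
import re
from html import escape
from typing import Iterable


def slugify(heading: str) -> str:
    """Turn a heading into a lowercase, hyphen-separated anchor id."""
    return re.sub(r"[^a-z0-9]+", "-", heading.lower()).strip("-")


def table_of_contents(headings: Iterable[str]) -> str:
    """Build an HTML list of in-page anchor links (headings assumed unique)."""
    items = [
        f'<li><a href="#{slugify(h)}">{escape(h)}</a></li>' for h in headings
    ]
    return "<ul>\n" + "\n".join(items) + "\n</ul>"


if __name__ == "__main__":
    print(table_of_contents(["Crawl efficiency", "UX & conversion"]))
```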

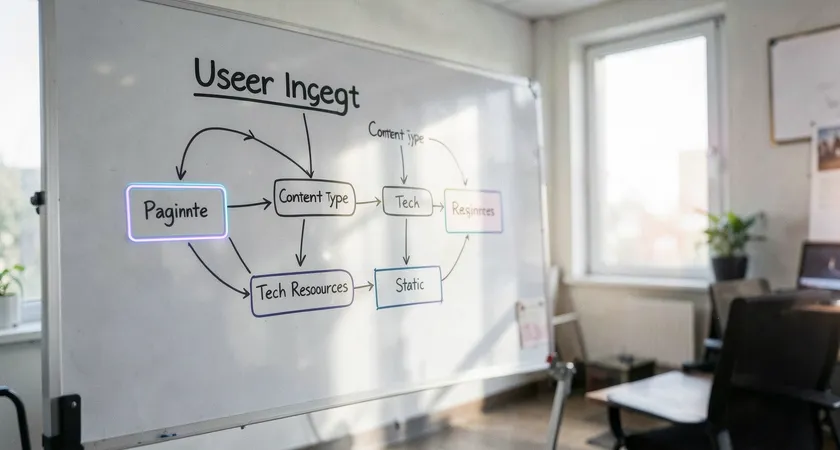

Making the strategic choice: a framework for your project

There is no universal best choice. The correct answer depends on your specific content, users, and technical capabilities. Use this framework to guide your decision.

First, analyze the primary user intent. Search for your own target keywords. Do the top results tend to be long, comprehensive guides (suggesting static is expected), or are they paginated category pages? Check which search result features appear. Do you see sitelinks pointing to different sections of a long page? That's a clue about how Google understands the content.

Second, audit your resources. Do you have the front-end and back-end expertise to implement a performant infinite scroll with SEO-friendly URL management? Or is a simpler, server-side paginated system more within your team's reliable execution scope? A technically brilliant static page that kills your LCP score is worse than a simple, fast paginated series.

Third, consider content velocity and maintenance. A paginated news archive is logical because new items push old ones to new pages. A static encyclopedia entry is logical because it is a fixed, curated resource. How often will the content change, and who will update it? Changing a site-wide pagination parameter (e.g., from 10 to 25 items per page) can have significant SEO repercussions, as it changes which items appear at each URL in the series and how many of those URLs still exist.
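The effect of changing the per-page setting is easy to quantify. The Python sketch below uses hypothetical helper names and a 30-item series as an assumed example; it counts how many items end up on a different page number when the limit moves from 10 to 25.

```python
from typing import List


def page_of(item_position: int, per_page: int) -> int:
    """1-based page number that holds the item at a 1-based position."""
    return (item_position - 1) // per_page + 1


def moved_items(total_items: int, old_per_page: int, new_per_page: int) -> List[int]:
    """Positions whose page number changes when the per-page limit changes."""
    return [
        pos
        for pos in range(1, total_items + 1)
        if page_of(pos, old_per_page) != page_of(pos, new_per_page)
    ]


if __name__ == "__main__":
    moved = moved_items(30, old_per_page=10, new_per_page=25)
    print(f"{len(moved)} of 30 items change page")
```

In this example only the first 10 items stay where they were, and the old page 3 URL disappears entirely, so any links or rankings attached to it need a redirect.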

For most, the pragmatic path involves hybrid or context-specific choices. Use pagination for browse-based archives, search results, and forums where users are filtering and exploring. Use static delivery for substantive, process-based content like tutorials, whitepapers, and product specifications that are consumed linearly. E-commerce sites often use both: paginated category browsing, but static, lengthy product description pages.

When to seek expert guidance on architecture

The line between a standard implementation and a complex one is thin. You might start with a simple paginated blog roll, only to later add filtering, sorting, and tags. Suddenly, your pagination logic interacts with multiple parameters, creating combinatorial explosion in URL possibilities and duplicate content risks. These scaling complexities are where in-house teams often get bogged down in reactive fixes.
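To get a feel for that combinatorial growth, the rough Python estimate below multiplies page count by the number of parameter combinations. The parameter names and counts are invented for illustration, and the result is an upper bound, since real filtered lists usually have fewer pages than the unfiltered one.

```python
from math import prod
from typing import Dict


def url_variant_upper_bound(pages: int, option_counts: Dict[str, int]) -> int:
    """Upper bound on distinct URLs when filters combine with pagination.

    Each parameter is either absent or set to one of its values, hence the
    `count + 1` per parameter. Every combination is assumed to paginate to
    `pages` pages, which overstates the real number.
    """
    return pages * prod(count + 1 for count in option_counts.values())


if __name__ == "__main__":
    # Hypothetical blog: 20 pages, 3 sort orders, 15 tags, 5 authors.
    print(url_variant_upper_bound(20, {"sort": 3, "tag": 15, "author": 5}))
```

Even with these modest assumed numbers, a 20-page archive can expose thousands of crawlable URL variants, which is why parameter handling needs a plan before filters ship.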

Similarly, migrating from a paginated system to a static one, or vice versa, is a high-risk SEO operation. It requires meticulous planning of 301 redirects, canonical tag updates, and content reprocessing. Without a clear map of how search engines index your current structure and how users interact with it, such a migration can lead to significant traffic loss. This is a scenario where experience from having managed similar migrations is invaluable, as the pitfalls are often not obvious until you're dealing with the downturn in organic visibility.
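For the paginated-to-static direction, the redirect plan can start as a simple mapping from every old page URL to the new single URL. The Python sketch below assumes the /page/N/ path scheme used earlier and a hypothetical function name; how the map is loaded into your server or CDN as 301 rules depends on your stack and is not shown.

```python
from typing import Dict


def redirect_map(base: str, total_pages: int, target: str) -> Dict[str, str]:
    """Map each old paginated path to the new static URL (to be served as 301s)."""
    base = "/" + base.strip("/") + "/"
    redirects: Dict[str, str] = {}
    for page in range(1, total_pages + 1):
        old = base if page == 1 else f"{base}page/{page}/"
        if old != target:  # never redirect a URL to itself
            redirects[old] = target
    return redirects


if __name__ == "__main__":
    for old, new in redirect_map("/reviews/", 3, "/reviews/").items():
        print(f"301 {old} -> {new}")
```

Generating the map from the same page count the old system used makes it easier to verify that no indexed URL is left without a destination.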

The underlying theme is that content delivery is a foundational layer. It's not a superficial template choice. Its interaction with SEO, performance, and user psychology is profound. While platforms and plugins offer basic solutions, tailoring them to your unique content ecosystem and business goals often requires a depth of cross-disciplinary knowledge spanning information architecture, front-end performance, and search engine protocols.

Choosing between paginated and static content delivery is a strategic technical decision with lasting impact. It balances the crawl efficiency and focused authority of a single page against the user-friendly chunking and performance benefits of a series. Your decision must be rooted in a clear understanding of user tasks, content volume, and your team's ability to implement the pattern correctly. Avoid the common pitfalls of thin paginated pages, uncrawlable infinite scroll, and poor interlinking. Start by auditing what you have, model the user journey, and test for performance. Sometimes, the most effective architecture is not a pure choice, but a context-aware hybrid designed for both the bot and the human reader.