Your article ranks on page one. Traffic is stable. Then, over the course of a few months, a gradual but persistent decline begins. You check for manual actions, technical issues, and competitor backlinks, but find nothing. Often, the culprit is simpler: the content is no longer fresh in the eyes of Google's algorithms, especially for queries where timeliness is a factor. For sites that publish dynamically, such as those driven by news cycles, product updates, or SaaS documentation, maintaining perceived freshness becomes a core SEO challenge, not a one-time editorial task.

Dynamic publishing doesn't just mean frequent posting. It means your content's core value proposition can decay if not actively maintained. This guide provides actionable content freshness tips, moving beyond the basic advice to "update old posts." We'll examine how search engines evaluate freshness, the specific signals that trigger a re-crawl and re-evaluation, and a structured framework for integrating content updates into your publishing workflow. You'll learn how to systematically identify at-risk content, execute updates that search engines recognize, and avoid common pitfalls that leave refreshed pages languishing.

Why Freshness Matters Beyond the "Last Updated" Date

Google's Gary Illyes once clarified that the "last updated" date Google sometimes shows in search results isn't a direct ranking factor. This led to confusion, with some interpreting it to mean freshness doesn't matter. That's a misreading. The displayed date may not be a direct signal, but the underlying content changes that prompt the date change can be. Search engines, particularly for what they classify as "freshness-sensitive" queries, re-crawl and re-evaluate pages regularly. Their goal is to determine if the information is current, accurate, and comprehensive compared to other available sources.

Think of it as a library. A book with a 2024 publication date on the spine gets more initial interest than one from 2018 for topics like "best Python frameworks." But if that 2024 book has outdated code samples, its relevance plummets. Meanwhile, a well-maintained 2018 book that has been revised with new appendix pages might still be the most valuable resource. The crawler is the librarian, constantly checking which books have new pages inserted or old chapters revised.

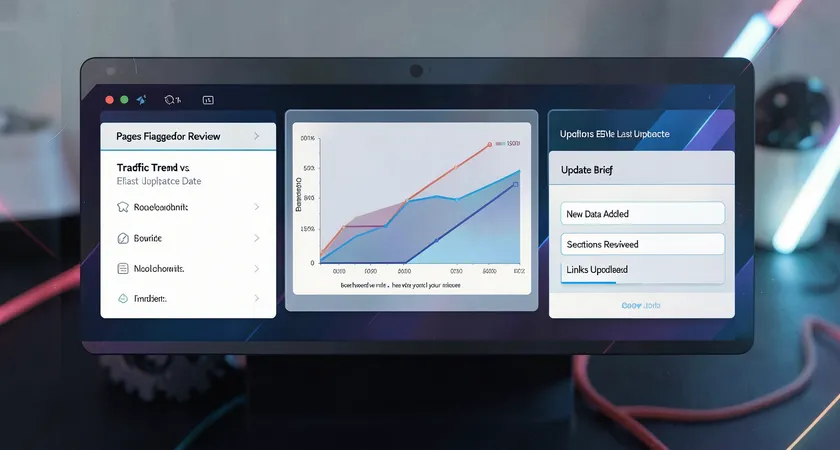

In most technical SEO audits we conduct, we observe a clear pattern: pages that have not been substantively updated in over 18 months often lose traction for competitive, mid-funnel keywords. The decline is rarely a cliff; it's a slow erosion of click-through rate and an incremental loss of rankings to more recently updated competitors. The algorithm isn't punishing old content. It's gradually elevating content that demonstrates ongoing relevance through updates.

Identifying Freshness-Sensitive Content in Your Portfolio

Not all content requires the same update frequency. A cornerstone guide on "the principles of double-entry bookkeeping" has a long shelf life. A product comparison guide for "best project management software in 2024" has a built-in expiration date. You need a triage system. Start by analyzing your Search Console performance data for queries that imply a time element. Look for terms like a specific year ("2024"), "new," "latest," "current," or "vs" (in a fast-moving industry). Pages targeting these terms belong in your high-priority refresh queue.
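As a rough illustration of that triage, the sketch below scans exported query data for time-related terms and ranks pages by the clicks those queries bring in. The row layout (page, query, clicks) and the pattern list are assumptions for the example, not a Search Console export format.

```python
import re

# Hypothetical pattern built from the terms named above; tune it for your niche.
# Note that a bare word like "new" also matches queries such as "new york".
TIME_SENSITIVE = re.compile(r"\b(20\d{2}|new|latest|current|vs)\b", re.IGNORECASE)

def is_time_sensitive(query):
    """Return True if the query contains a term that implies timeliness."""
    return TIME_SENSITIVE.search(query) is not None

def refresh_queue(rows):
    """rows: iterable of (page_url, query, clicks) tuples.
    Returns pages ordered by clicks from time-sensitive queries, highest first."""
    clicks_by_page = {}
    for page, query, clicks in rows:
        if is_time_sensitive(query):
            clicks_by_page[page] = clicks_by_page.get(page, 0) + clicks
    return sorted(clicks_by_page, key=clicks_by_page.get, reverse=True)

rows = [
    ("/pm-software", "best project management software 2024", 120),
    ("/bookkeeping", "principles of double-entry bookkeeping", 300),
    ("/pm-software", "asana vs trello", 80),
    ("/crm", "latest crm tools", 50),
]
print(refresh_queue(rows))  # ['/pm-software', '/crm']
```

The evergreen bookkeeping page never enters the queue, even though it has the most clicks, because none of its queries carry a time element.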

Beyond keyword semantics, consider topic volatility. Industries like cryptocurrency, digital marketing trends, and software-as-a-service are high-volatility. Healthcare guidelines and financial regulations are medium-volatility, changing with official announcements. Gardening tips and historical analyses are low-volatility. Categorize your content pillars by this volatility metric to set realistic refresh cadences.
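One way to turn those volatility tiers into a schedule is a simple lookup from tier to review interval. The interval values below are illustrative assumptions, not recommendations from any search engine.

```python
from datetime import date, timedelta

# Assumed review intervals in days per volatility tier (illustrative numbers).
REVIEW_INTERVAL_DAYS = {"high": 90, "medium": 180, "low": 365}

def next_review(last_reviewed, volatility):
    """Date on which a page in the given tier is next due for review."""
    return last_reviewed + timedelta(days=REVIEW_INTERVAL_DAYS[volatility])

def is_due(last_reviewed, volatility, today):
    """True once the review date for this tier has arrived."""
    return today >= next_review(last_reviewed, volatility)

print(next_review(date(2024, 1, 1), "high"))  # 2024-03-31
```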

The Core Signals That Trigger a Freshness Re-Crawl

You change a comma on a page. Does Google care? Almost certainly not. You completely overhaul the introduction, add a new H2 section with recent data, and replace five broken outbound links with current, authoritative sources. That, Google will likely notice. To systematize updates, you must understand what prompts a search engine to not only re-crawl a page but to re-process its content and potentially adjust its ranking evaluation.

The primary signal is the change delta across the main content body. Adding or rewriting substantial paragraphs, particularly near the top of the content, is a strong cue. Changing the title tag or meta description, while important for CTR, is a weaker freshness signal for the core content itself. Another powerful signal is the update of time-sensitive references. Replacing "last year" with "this year," updating statistics from a 2021 study to a 2023 study, or revising a list of "top tools" to include a market newcomer tells the algorithm the information is being curated.
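Search engines don't publish how they measure a change delta, but an editorial team can approximate it to tell a substantive revision from a cosmetic one. This minimal sketch uses Python's standard difflib to compare two versions of a page's body text word by word; the function name is hypothetical, and the score is a proxy for internal use only.

```python
import difflib

def change_ratio(old_text, new_text):
    """Fraction of the word sequence that differs between two versions (0.0 to 1.0)."""
    old_words, new_words = old_text.split(), new_text.split()
    matcher = difflib.SequenceMatcher(None, old_words, new_words, autojunk=False)
    return 1.0 - matcher.ratio()

before = "The best tools of last year were A and B"
after = "The best tools of this year are A, B and newcomer C"
print(round(change_ratio(before, after), 2))
```

A comma fix scores close to zero, while a rewritten introduction and a new section push the score up, which gives editors a quick sanity check before they mark an update as done.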

Field data suggests that structural changes also play a role. Adding new, relevant internal links from recently published pages to an older piece sends a useful contextual signal. It acts as a vote of confidence from your own site, suggesting the older content remains a relevant destination. Conversely, if an important page receives no new internal links over a long period, it may be perceived as fading into the archive.

Here is a practical checklist for an update that aims to trigger a meaningful freshness re-evaluation:

- Update all time-bound phrases ("this year," "currently," "as of [Month] [Year]").

- Add a new section addressing a recent development or FAQ that has emerged since publication.

- Refresh at least 30% of the body text, focusing on the introduction and key explanatory paragraphs.

- Audit and update all outbound links, replacing broken or outdated links with current, authoritative sources.

- Add new internal links from at least two recently published, topically related pages.

Building a Sustainable Content Refresh Workflow

A haphazard approach to content freshness is unsustainable. Marking a calendar to "update old posts" leads to procrastination and shallow updates. The solution is to treat content maintenance as a distinct, scheduled workflow integrated into your content operations. This moves freshness from a reactive SEO task to a proactive publishing pillar.

Start with an audit cadence. For a high-volatility site, a quarterly review of top-performing and high-potential decaying pages is necessary. For lower-volatility sites, a twice-yearly audit may suffice. Use analytics to flag pages with a specific trend: steady traffic growth followed by a plateau or decline over the past 4-6 months. These are your prime refresh candidates, not the pages that have never performed.
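The "growth, then plateau or decline" pattern can be expressed as a small rule over monthly traffic totals. The sketch below is one possible heuristic; the function name, the four-month window, and the minimum peak of 100 clicks are assumptions to adjust against your own data.

```python
def is_refresh_candidate(monthly_clicks, recent_months=4, min_peak=100):
    """monthly_clicks: chronological list of monthly totals, oldest first.
    Flags pages that grew to a meaningful peak and have since flattened or fallen."""
    if len(monthly_clicks) < recent_months + 2:
        return False  # not enough history to judge a trend
    earlier = monthly_clicks[:-recent_months]
    recent = monthly_clicks[-recent_months:]
    peak = max(earlier)
    grew = peak >= min_peak and peak > earlier[0]
    stalled = max(recent) <= peak and recent[-1] <= recent[0]
    return grew and stalled

print(is_refresh_candidate([50, 80, 120, 150, 148, 140, 131, 120]))  # True
print(is_refresh_candidate([5, 6, 5, 4, 5, 6, 5, 4]))                # False
```

The second page is excluded because it never reached a meaningful peak, which matches the point above: pages that never performed are not refresh candidates.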

The update process itself needs clear guidelines and quality gates. An editor should not just "freshen it up." They should follow a brief that mandates specific actions: update X number of statistical references, add a section on Y emerging trend, answer Z new common question from forum scans. This ensures consistency and depth. The output is not just a changed page, but a page with a documented increase in comprehensiveness and timeliness.

Automating Triggers and Alerts

Manual audits are foundational, but scaling requires automation. This is where API-driven workflows become critical. You can set up systems to automatically flag content based on predefined rules. For instance, a script can run monthly to identify pages containing phrases like "in 2022" or "last year's report." It can cross-reference these with pages that have seen a drop in average ranking position, creating a prioritized alert list for the editorial team.
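A minimal sketch of such a script follows. It assumes each page is available as a dict with hypothetical keys (url, body, prev_position, position) that you would populate from your CMS and rank-tracking exports; the two-position drop threshold is likewise an assumption.

```python
import re

YEAR_PHRASE = re.compile(r"\bin (20\d{2})\b", re.IGNORECASE)
RELATIVE_PHRASE = re.compile(r"\blast year(?:'s)?\b", re.IGNORECASE)

def has_stale_phrases(text, current_year):
    """True if the text mentions a past year ("in 2022") or a relative phrase ("last year")."""
    old_years = [y for y in YEAR_PHRASE.findall(text) if int(y) < current_year]
    return bool(old_years) or RELATIVE_PHRASE.search(text) is not None

def alert_list(pages, current_year, min_drop=2.0):
    """Return (url, position_drop) pairs for stale pages whose ranking slipped,
    largest drop first. A larger position number means a lower ranking."""
    flagged = []
    for page in pages:
        drop = page["position"] - page["prev_position"]
        if drop >= min_drop and has_stale_phrases(page["body"], current_year):
            flagged.append((page["url"], drop))
    return sorted(flagged, key=lambda item: item[1], reverse=True)

pages = [
    {"url": "/a", "body": "In 2022 the market shifted.", "prev_position": 5.0, "position": 9.0},
    {"url": "/b", "body": "Based on last year's report.", "prev_position": 3.0, "position": 3.5},
    {"url": "/c", "body": "Timeless principles.", "prev_position": 4.0, "position": 10.0},
]
print(alert_list(pages, current_year=2025))  # [('/a', 4.0)]
```

Requiring both conditions keeps the list short: page /b has stale wording but stable rankings, and page /c dropped for reasons a phrase scan cannot explain.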

Another advanced tactic is monitoring competitor updates. Tools can track when a competing article in the SERPs receives a significant update. If three of your top five competitors refresh their articles on a given topic within a quarter, that's a strong market signal that your own content needs attention. This competitive intelligence can be fed into your editorial planning system to justify and prioritize refresh work.
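The "three of five competitors within a quarter" rule is easy to encode once you have last-modified dates for the competing URLs. How you obtain those dates (a monitoring tool, visible page dates, structured data) is left open here; the function below is a hypothetical sketch that only applies the threshold.

```python
from datetime import date, timedelta

def competitors_refreshed(modified_dates, today, window_days=90, threshold=3):
    """modified_dates: last-modified dates observed for the top competing URLs
    (None when unknown). True when at least `threshold` fall inside the window."""
    cutoff = today - timedelta(days=window_days)
    recent = [d for d in modified_dates if d is not None and d >= cutoff]
    return len(recent) >= threshold

observed = [date(2024, 5, 20), date(2024, 4, 2), None, date(2023, 11, 1), date(2024, 6, 1)]
print(competitors_refreshed(observed, today=date(2024, 6, 15)))  # True
```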

For sites with thousands of pages, a manual approach collapses. The workflow must be integrated into the CMS itself. Editorial calendars should have recurring tasks for content reviews, not just new publishes. Version history should be maintained not just for rollbacks, but to document the scope and date of SEO-driven refreshes for future analysis.

Common Pitfalls: When Freshness Efforts Backfire

Many teams, with the best intentions, execute freshness updates in ways that can harm more than help. The most frequent error is the cosmetic update. This involves changing the publication date to the current day without making substantive content changes. Search engines are increasingly adept at detecting this. If the crawler finds the new date but identical or nearly identical content, it may disregard the date change and, in some cases, interpret it as an attempt to manipulate rankings. The perceived freshness signal is lost, and trust is eroded.

A subtler pitfall is the update that dilutes quality. In an effort to add something new, a writer might insert a tangential paragraph or a low-value section that disrupts the article's original focus and user intent. The page becomes longer but less coherent. The freshness signal might be registered, but the page's quality score could drop, leading to a net negative result. Every update should pass the same editorial quality bar as a new publication.

Technical missteps also abound. A common scenario is when a page is updated and republished, but its XML sitemap entry isn't updated with a new <lastmod> date (though note that Google treats this as a hint, not a directive). More critically, if the CMS publishes the update at a new URL and redirects the old one, you put the original page's accumulated signals at risk: a permanent redirect generally carries most of them over, but consolidation takes time, and internal links and external backlinks now pass through an extra hop. The update should happen in place on the same canonical URL. Always verify that your CMS is configured to update posts, not create revisions that are published as new entities.
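To keep the sitemap in step with in-place edits, the lastmod value can be generated from the same modification date the CMS stores. This is a minimal sketch using Python's standard library; the build_sitemap function and the example URL are illustrative, while the namespace is the one defined by the sitemaps.org protocol.

```python
import xml.etree.ElementTree as ET
from datetime import date

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(entries):
    """entries: iterable of (url, last_modified_date) pairs.
    Returns the sitemap XML as a string, one <url> element per entry."""
    ET.register_namespace("", SITEMAP_NS)  # serialize with a default namespace
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for url, modified in entries:
        node = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(node, f"{{{SITEMAP_NS}}}loc").text = url  # same canonical URL as before
        ET.SubElement(node, f"{{{SITEMAP_NS}}}lastmod").text = modified.isoformat()
    return ET.tostring(urlset, encoding="unicode")

sitemap_xml = build_sitemap([("https://example.com/guide", date(2024, 5, 1))])
print(sitemap_xml)
```

Only bump the date passed in here when the page content actually changed; a lastmod that moves without a matching content change gives crawlers a reason to trust the value less.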

Finally, there's the resource pitfall. A team embarks on an ambitious "update all posts from 2020" project. They exhaust their editorial capacity for three months, publish no new topical content, and the SEO impact of the updates is mixed and slow to materialize. This burns out the team and stalls momentum. A balanced, ongoing workflow that allocates a fixed percentage of resources (e.g., 20% of editorial time) to maintenance is far more sustainable and effective.

Moving Beyond Manual Work: Scaling Freshness for Large Sites

For a blog with a few hundred posts, a quarterly manual audit is feasible. For an enterprise site with tens of thousands of product pages, help articles, and blog posts, it's impossible. At this scale, the content freshness model must be architectural. It shifts from updating individual pieces to designing systems where content is inherently fresh because of how it's built and delivered.

One approach is dynamic content injection. Consider a page with a static, evergreen core, like a guide to API authentication. Embedded within that page, via an API call, could be a live-updated module showing "Recent Security Advisories" or "Latest Version History." The core page is crawled and indexed, and the dynamic module can supply new freshness signals each time it's updated at the source. This only works if the module's content is present in the HTML the crawler renders; a widget that loads solely after user interaction, or from an endpoint blocked by robots.txt, contributes nothing. The approach separates the stable, rankable container from the time-sensitive data within it.

Another scalable method is leveraging structured data to highlight freshness. For articles, the Article schema with datePublished and dateModified is crucial. Ensuring your CMS automatically updates the dateModified property on every substantive edit provides a clear machine-readable signal. For product pages, Offer markup with current price and availability values (or AggregateOffer with lowPrice and highPrice when several sellers are listed) can serve as a freshness indicator for commercial queries.
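As a sketch of the Article case, the snippet below builds the JSON-LD payload and moves dateModified only when an edit is marked substantive. The property names come from schema.org; the two function names and the substantive flag are assumptions about how a CMS hook might be wired.

```python
import json
from datetime import datetime, timezone

def next_date_modified(current_modified, edit_time, substantive):
    """Bump dateModified only for substantive edits; cosmetic edits keep the old value."""
    return edit_time if substantive else current_modified

def article_jsonld(headline, published, modified):
    """Return Article structured data as a JSON-LD string."""
    return json.dumps({
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": published.isoformat(),
        "dateModified": modified.isoformat(),
    }, indent=2)

published = datetime(2023, 2, 10, 8, 0, tzinfo=timezone.utc)
edited = datetime(2024, 6, 1, 9, 0, tzinfo=timezone.utc)
modified = next_date_modified(published, edited, substantive=True)
print(article_jsonld("Guide to API Authentication", published, modified))
```

The output would be embedded in a script tag of type application/ld+json in the page template.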

The ultimate scaling mechanism is a headless CMS or a custom platform with webhook-driven publishing. In these systems, an update in a primary data source (a product database, a research repository) can trigger a pre-defined content update workflow. For example, when a SaaS company releases version 2.5 of its software, an event can automatically flag all related help documentation for review, generate update tasks for writers, and even publish minor changes (like version numbers) across all affected pages instantly. The content engine is not just publishing; it's listening and responding to change events.
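The release example could look roughly like the handler below, which a webhook endpoint would call with the event payload. The payload fields, the document shape, and the handle_release name are all hypothetical; a real integration would read and write through your CMS's own API.

```python
import re

def handle_release(event, docs):
    """event: {"product", "old_version", "new_version"} (assumed payload shape).
    docs: list of dicts with keys slug, product, body.
    Returns (updated_docs, review_tasks) without mutating the inputs."""
    old, new = event["old_version"], event["new_version"]
    # Match the old version as a whole token, so "2.4" is replaced but "2.4.1" is not.
    pattern = re.compile(rf"(?<![\w.]){re.escape(old)}(?!\.?\d)")
    updated, tasks = [], []
    for doc in docs:
        if doc["product"] != event["product"]:
            updated.append(doc)  # unrelated documentation is left alone
            continue
        body, count = pattern.subn(lambda _match: new, doc["body"])
        updated.append({**doc, "body": body})
        # Every affected page still gets a human review task.
        tasks.append({"slug": doc["slug"], "action": "review", "version_edits": count})
    return updated, tasks

docs = [
    {"slug": "auth", "product": "acme", "body": "Version 2.4 adds SSO. See the 2.4.1 notes."},
    {"slug": "billing", "product": "other", "body": "Version 2.4 is current."},
]
event = {"product": "acme", "old_version": "2.4", "new_version": "2.5"}
updated, tasks = handle_release(event, docs)
print(updated[0]["body"])  # Version 2.5 adds SSO. See the 2.4.1 notes.
print(tasks)
```

Splitting the result into automatic edits and review tasks mirrors the distinction in the text: version numbers can change instantly, while anything that needs judgment goes to a writer.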

Maintaining content freshness in a dynamic publishing environment is a continuous commitment, not a project with an end date. It requires understanding the nuanced signals search engines use to gauge timeliness, moving beyond superficial date changes to substantive revisions, and building a repeatable process that integrates maintenance into the content lifecycle. The most common failure point isn't a lack of effort; it's the attempt to manage freshness reactively through sheer manual labor, which becomes a bottleneck as content volume grows.

The practical next step is to conduct a targeted audit. Don't look at your entire archive. Select one high-value content pillar that you know is in a volatile space. Analyze the top three performing pages in that pillar. Systematically apply the deep-update checklist from this guide: refresh time references, add a new relevant section, update links. Monitor the crawl and ranking behavior of those pages over the next 45 days. This controlled experiment will provide your own site-specific data on what a meaningful freshness update looks like for your audience and your industry.

For organizations where content is a primary growth channel, the complexity of scaling these processes, integrating analytics, automation, and quality editorial work, often justifies a specialized approach. The technical integration of freshness signals, audit workflows, and update deployment is a significant systems challenge. It's the kind of operational layer where dedicated platforms and expert implementation shift the focus from perpetual maintenance to strategic, sustained relevance.