You just got the first draft from your new automated content engine. It ticks the SEO boxes, links to your target landing pages, and is formatted perfectly. But the tone is slightly off-brand, a key claim lacks a supporting source, and the call to action feels generic. This is the moment editorial control matters. Automated publishing isn't about removing human judgment, but about structuring and scaling it. Effectively managing editorial oversight in these workflows is what separates a chaotic stream of content from a strategic asset that builds authority and converts. Let's examine the practical systems and guardrails needed to align automated output with real-world editorial standards.

Defining what editorial control means for your automation pipeline

Editorial control in automated publishing isn't a single switch. It's a layered system that governs content before, during, and after its automated generation. Many teams mistake it for a simple pre-approval step, which creates bottlenecks and defeats the purpose of automation. True control means embedding your standards into the system itself. That starts with clear documentation of what those standards are.

Consider your brand voice. An automated tool might be instructed to be "professional." That's too vague. Instead, break it down into operational rules. Does professional mean avoiding contractions? Does it mean using active voice in more than 70% of sentences? Does it mean choosing specific industry terminology over more general synonyms? The more you can codify your voice into discrete, machine-readable guidelines, the more control you can effectively delegate to the system. This extends to SEO requirements, legal disclaimers, and sourcing protocols. Without this foundational layer, every piece of output requires heavy manual correction.
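To make "machine-readable guidelines" concrete, here is a minimal sketch of two voice rules expressed as data plus a checker. Everything in it is an assumption for illustration: the `VoiceRules` fields, the example term pair, and the deliberately naive contraction pattern, which would also flag possessives such as "team's". Measuring the share of active-voice sentences needs real linguistic tooling and is left out.

```python
import re
from dataclasses import dataclass

# Hypothetical rule set; field names and defaults are illustrative only.
@dataclass(frozen=True)
class VoiceRules:
    allow_contractions: bool = False
    # Pairs of (term to avoid, preferred term).
    preferred_terms: tuple = (("cheap", "cost-effective"),)

# Naive pattern: a word, a straight apostrophe, and a common contraction ending.
# It also matches possessives, so treat hits as prompts for review.
CONTRACTION = re.compile(r"\b\w+'(?:t|s|re|ve|ll|d|m)\b", re.IGNORECASE)

def check_voice(text: str, rules: VoiceRules) -> list:
    """Return human-readable descriptions of rule violations found in text."""
    violations = []
    if not rules.allow_contractions:
        for match in CONTRACTION.finditer(text):
            violations.append(f"contraction: {match.group(0)}")
    for avoid, prefer in rules.preferred_terms:
        if re.search(rf"\b{re.escape(avoid)}\b", text, re.IGNORECASE):
            violations.append(f"term: use '{prefer}' instead of '{avoid}'")
    return violations
```

Calling `check_voice("We don't sell cheap plans.", VoiceRules())` returns one contraction violation and one terminology violation. A draft that follows both rules returns an empty list.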

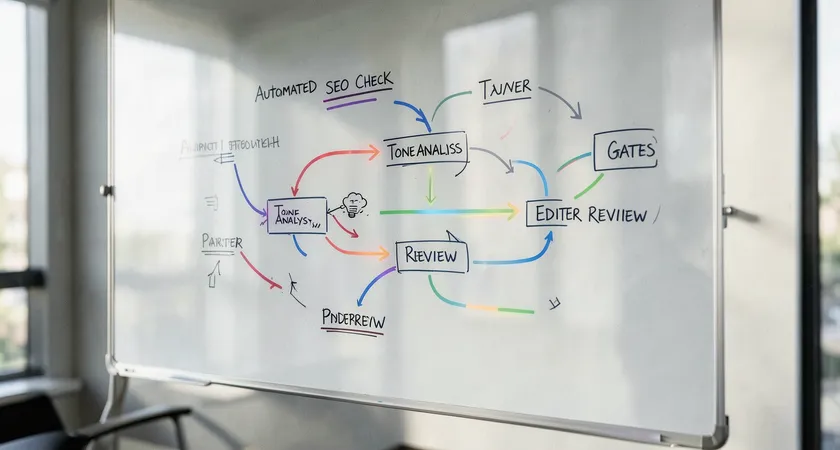

The three pillars of procedural oversight

A sustainable oversight model rests on three interconnected pillars. The first is input control. This involves curating the data sources, brand assets, and briefs that feed the automation. A flawed brief guarantees flawed output. The second pillar is process control. This consists of the automated checks, templates, and structured workflows that standardize creation. Think of it as the assembly line's quality gates. The third pillar is output control: the human review and approval stages that catch problems of nuance, creativity, and strategic alignment the machine may miss. The goal is to shift the bulk of effort to input and process control, making the final human review faster and more focused on high-value judgment calls.

Implementing practical guardrails without creating bottlenecks

Picture a marketing manager who must personally approve every single social media post scheduled by their team's tool. That's a bottleneck. The same dynamic kills the efficiency gains of content automation if guardrails are poorly designed. The solution is to implement tiered levels of oversight based on content risk and value. Not every piece requires the same scrutiny.

Start by categorizing your content. High-stakes pieces, like cornerstone SEO articles explaining your core service or a blog post making a bold market claim, might require full editorial review by a subject matter expert. Medium-risk content, such as standard product update announcements or middle-of-funnel comparison guides, could go through a junior editor or use a peer-review system within the team. Low-risk, high-volume content, like meta description generation or FAQ page population, might only need a spot-check audit after publication. This risk-based approach allocates precious human attention where it has the most impact on quality and brand safety.
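The tiering described above can be sketched as a simple lookup. The content-type keys and the `Review` enum are hypothetical names chosen for this example, and the mapping itself is something each team would define for its own catalogue.

```python
from enum import Enum

class Review(Enum):
    EXPERT_REVIEW = "full review by a subject matter expert"
    PEER_REVIEW = "junior editor or peer review"
    SPOT_CHECK = "spot-check audit after publication"

# Hypothetical mapping from content type to review tier.
TIER_BY_CONTENT_TYPE = {
    "cornerstone_article": Review.EXPERT_REVIEW,
    "market_claim_post": Review.EXPERT_REVIEW,
    "product_update": Review.PEER_REVIEW,
    "comparison_guide": Review.PEER_REVIEW,
    "meta_description": Review.SPOT_CHECK,
    "faq_entry": Review.SPOT_CHECK,
}

def route(content_type: str) -> Review:
    """Pick a review tier; unknown content types get the strictest tier."""
    return TIER_BY_CONTENT_TYPE.get(content_type, Review.EXPERT_REVIEW)
```

Defaulting unknown types to expert review is a design choice in this sketch: a new content type should be classified by a person before it is allowed onto a lighter track.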

The role of templates and structured data

Templates are the unsung heroes of scalable editorial control. A well-designed template does more than enforce formatting; it guides the narrative and embeds compliance. For an automated system, a template could mandate sections like "Key Takeaway," "Supporting Data," and "Next Steps," ensuring a consistent reader experience. More advanced control uses structured data fields. Instead of a blank canvas for an introduction, the system prompts for a "Primary Keyword" field, a "Problem Statement" field, and a "Reader Promise" field. This structures the input, making the output more predictable and easier to validate automatically against your style guide. It transforms creative writing, in part, into a form of data entry governed by clear rules.
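As a rough illustration of structured fields, the three inputs named above could be modeled as a small record with a validator. The class name, field names, and the rule that the problem statement should mention the primary keyword are assumptions made for this sketch, not requirements of any particular tool.

```python
from dataclasses import dataclass, fields

@dataclass
class IntroBrief:
    primary_keyword: str
    problem_statement: str
    reader_promise: str

def validate_brief(brief: IntroBrief) -> list:
    """Return a list of problems; an empty list means the brief is usable."""
    errors = [
        f"{f.name} is empty"
        for f in fields(brief)
        if not getattr(brief, f.name).strip()
    ]
    # Assumed house rule: the problem statement should mention the keyword.
    # Only checked once every field has content.
    if not errors and brief.primary_keyword.lower() not in brief.problem_statement.lower():
        errors.append("problem_statement does not mention primary_keyword")
    return errors
```

A brief that fails validation never reaches the generation step, which is the input-control idea applied at the smallest possible scale.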

Common pitfalls teams encounter and how to sidestep them

One frequent pitfall is the "set and forget" mentality. Teams invest time in initial setup, create a few templates, and then let the system run unattended. In practice, content trends, search engine algorithms, and brand messaging evolve. Without a scheduled review cycle for your automation rules and output, your content slowly drifts out of alignment. Establish a quarterly audit. Pull a random sample of published automated content and grade it against your current standards. This isn't about blaming the tool; it's about refining the instructions you're giving it.
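Pulling the audit sample can be as small as the sketch below. The function names, the idea of identifying content by an ID string, and the pass/fail grading format are all assumptions for illustration. Fixing the seed makes a given quarter's sample reproducible if someone later asks how the pieces were chosen.

```python
import random

def audit_sample(published_ids: list, sample_size: int, seed: int) -> list:
    """Draw a reproducible random sample of published content IDs."""
    rng = random.Random(seed)
    k = min(sample_size, len(published_ids))
    return rng.sample(published_ids, k)

def pass_rate(grades: dict) -> float:
    """Share of audited pieces that met current standards (True = pass)."""
    if not grades:
        return 0.0
    return sum(1 for passed in grades.values() if passed) / len(grades)
```

Tracking the pass rate from one audit to the next gives a simple signal of drift: a falling rate suggests the rules need updating, not just the individual pieces.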

Another common issue is a disconnect between the SEO team setting the keywords and the legal or compliance team concerned about claims. Automated publishing can amplify this silo problem at high speed. The guardrail here is a shared, consolidated rulebook. Your keyword targeting parameters and your compliance-approved phrasing for specific topics must exist in the same source of truth that feeds the automation. When these rules conflict, as they often do, the conflict must be resolved by humans before it's encoded into the system. Letting the automation try to reconcile contradictory directives leads to unusable or risky content.
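One way to surface such conflicts before they are encoded is to compare the two rule sets directly. This sketch assumes the rulebook stores target keywords and compliance-restricted phrases as plain lists of strings; `find_conflicts` is a hypothetical helper, and simple substring matching will miss paraphrases.

```python
def find_conflicts(target_keywords: list, restricted_phrases: list) -> list:
    """Return (keyword, phrase) pairs where a keyword contains a restricted phrase."""
    conflicts = []
    for keyword in target_keywords:
        for phrase in restricted_phrases:
            if phrase.lower() in keyword.lower():
                conflicts.append((keyword, phrase))
    return conflicts
```

The function only reports conflicts. Deciding whether the keyword is dropped or the phrasing is approved stays with the people who own those rules.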

When and why even robust DIY systems reach their limits

You've built a solid framework: tiered reviews, detailed templates, and quarterly audits. Your automated content is consistent and mostly on-brand. Then you decide to expand into a new regional market or a completely different product vertical. Suddenly, your carefully crafted rulebook feels inadequate. The nuances of a new audience, local search intent variations, and unfamiliar competitive landscapes expose the brittleness of a system built on a single set of assumptions. Scaling editorial control across diverse use cases often requires a level of system sophistication and maintenance that overwhelms internal teams.

This is the natural limit of many DIY approaches. The ongoing labor required to update taxonomies, refine language models for new topics, and integrate with an ever-changing martech stack becomes a full-time job. The hidden cost shifts from manually writing content to manually engineering and curating the automation system itself. Teams find themselves maintaining a complex internal tool rather than focusing on core marketing strategy. At this point, the question becomes whether that investment in internal platform management is the best use of your team's expertise.

The expertise gap in maintaining language quality

Beyond technical maintenance, there's a qualitative ceiling. Automated systems excel at following explicit rules but struggle with implicit knowledge, creative flair, and the evolving subtleties of human language. An internal team might know the rule "use term X, not term Y," but they may lack the deep linguistic or SEO expertise to understand why that rule exists or how to adapt it as language changes. Maintaining the quality of the language model, ensuring it produces not just grammatically correct but engaging and strategically nuanced text, is a specialized discipline. It sits at the intersection of computational linguistics, SEO, and content strategy, a skillset rarely found in-house at the depth required for enterprise-scale output.

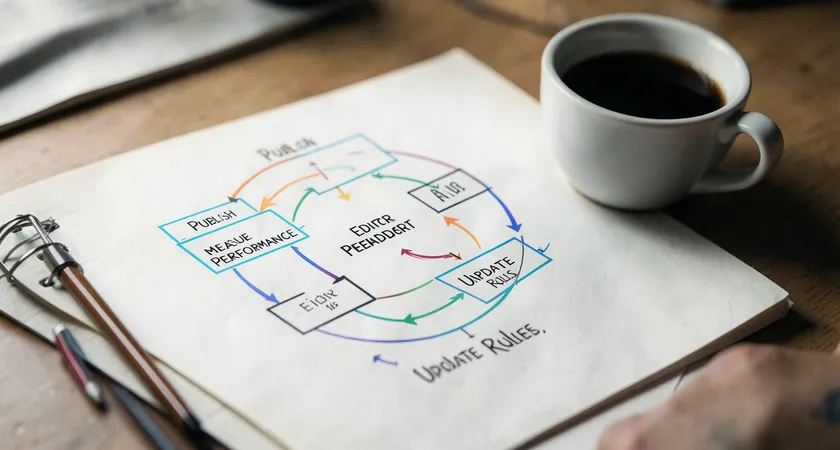

Building a sustainable model for scaled quality assurance

Sustainability in automated publishing means designing a control system that learns and improves without exponential growth in human labor. The cornerstone of this is a closed feedback loop. Every piece of content published should feed data back into the system. This includes performance metrics like organic traffic and engagement time, as well as the qualitative issues editors flag during review. If editors consistently change a particular phrasing generated by the system, that pattern should be detectable and the underlying rule should be updated.

This moves quality assurance from a purely defensive, gatekeeping role to a proactive, tuning function. The editorial team's insights become the training data that makes the system smarter. Implementing this technically might involve maintaining a shared log of overrides and exceptions, which then informs periodic retraining or prompt engineering sessions. The sustainable model acknowledges that initial rules will be imperfect. It therefore prioritizes the mechanism for identifying and correcting those imperfections efficiently over time, turning editorial control into a continuous refinement process rather than a static filter.
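A shared override log can start out very simple. The sketch below assumes each editor change is recorded as a pair of (generated phrasing, editor's replacement); the function name and the threshold of three occurrences are illustrative choices, not recommendations.

```python
from collections import Counter

def recurring_overrides(log: list, min_count: int = 3) -> list:
    """Return ((generated, replacement), count) pairs seen at least min_count times."""
    counts = Counter(log)
    return [(pair, n) for pair, n in counts.most_common() if n >= min_count]
```

Each pair this returns is a candidate rule change to discuss in the next prompt or rule review: if editors keep replacing the same phrasing, the instruction that produces it is probably what needs fixing.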

Managing editorial control in automated publishing is an ongoing exercise in balance. It's about finding the equilibrium between scalable efficiency and unwavering quality. The most successful implementations treat automation not as a writer replacement, but as a powerful collaborator that handles consistency and volume under clear, evolving human direction. The control systems you build define the ceiling for what your automated content can achieve. They ensure that every article, product description, or meta tag not only ranks but also resonates, builds trust, and accurately represents your brand. The next step is to audit your current workflow, map out where editorial decisions are made, and ask a simple question: which of these decisions can we codify, and which must remain a uniquely human judgment call for the foreseeable future?